A group of eight- and nine-year-olds sat around tables covered in large sheets of paper, markers in hand, on a Tuesday morning in April in a third-floor classroom at DREAM Charter School in East Harlem. They were working on a task that the New York City Department of Education had yet to complete: writing the rules for artificial intelligence.

The hand-drawn borders, block lettering, and sporadic misspellings on the posters they created represented concepts that policy staff and education researchers had been discussing for months in conference rooms. “Use your brain first.” “Don’t copy and paste.” “Check your work.” “AI can turn our brains into mush,” a third-grader named David Ortiz said, summarizing the main issue with a directness that no adult in any policy document has quite matched. “It would be no point of school if AI is going to tell you everything.”

That is an impressive statement for an eight-year-old. What made David’s observation remarkable was not that kids occasionally surprise adults, but that he had described the phenomenon cognitive scientists call “cognitive offloading”: the process by which machine reasoning gradually replaces human reasoning, leaving the human less skilled, less capable, and less conscious of the loss. In a recent study, MIT researchers asked three groups to write essays: one group used ChatGPT, one used Google, and one used only their own minds. The ChatGPT users grew lazier with every assignment, wrote the dullest essays, and showed the least neurological engagement. By the end, many were merely copying and pasting. Despite having no affiliation with MIT and no research funding, David had come to a similar conclusion.

| Item | Details |
|---|---|
| Location | DREAM Charter School, East Harlem, New York City |
| Grade Level | Third Grade (ages 8–9) |
| Activity | Students drafting their own AI use guidelines and guardrails |
| Date | April 2026 |
| Lesson Designer | Lego Education |
| Lead Teacher | Kale Blackshear |
| School Co-CEO | Eve Colavito |
| Key Student Quote | “AI can turn our brains into mush” (David Ortiz, age 8) |
| Core Concept Identified by Students | “Cognitive offloading”: letting AI do the thinking instead of using your own brain |
| NYC DOE Status | Still collecting feedback; finalized AI policy not yet released as of April 2026 |
| School AI Stats | 78% of DREAM staff already using AI tools; goal of 60% student adoption as learning aid |
| Parent Opposition | “Parents for AI Caution” group calling for complete halt to AI in classrooms |

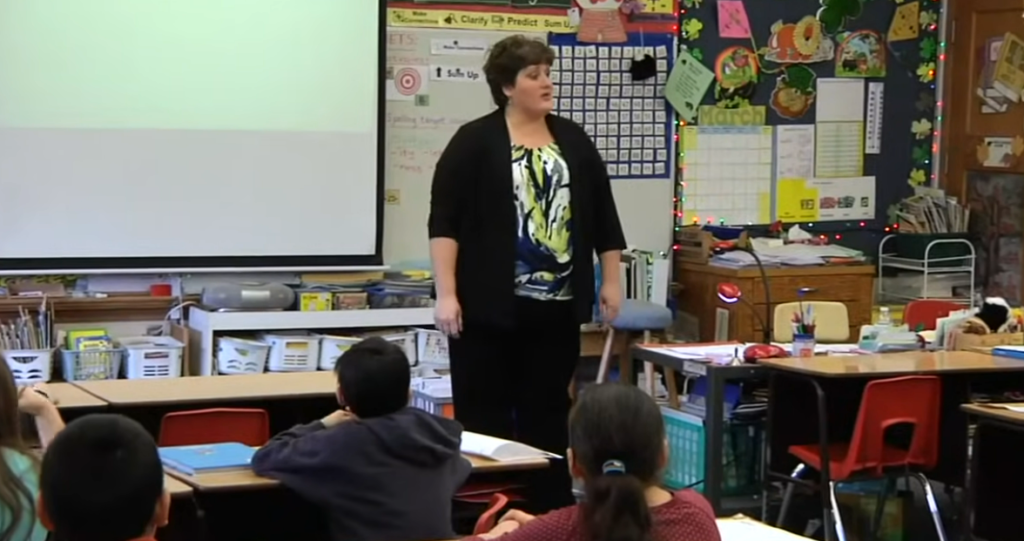

Teacher Kale Blackshear led the DREAM lesson, which was created by Lego Education. Blackshear put it this way: “The school’s job is to get ahead of it rather than pretend otherwise because AI is going to be part of these children’s lives.” By the time the lesson started, 78 percent of the school’s teaching staff had already used AI tools for planning and preparation. The administration was not attempting to outlaw a technology that students were already using at home, on their phones, or on the school-provided devices that are now available in the majority of American classrooms starting in kindergarten. Its objective was harder: teaching kids to use the tool without becoming reliant on it.

Karter Nieves, another student, brought up the issue of cheating with a clarity that suggested he had witnessed it firsthand. “A lot of people use AI to cheat on their essays or tests,” he said. “Sometimes people use it, and they copy and paste.” He was eight years old. He had already identified the pattern, recognized it as a problem, and given it a name. In a separate school project, a different group of third-graders created their own AI system called “Growth Spurt” and imposed stringent controls on it, preventing it from playing games or providing direct answers. It turns out that kids can write guardrails at least as thoughtful as those proposed by the adults who have been discussing the same issues for two years.

The timing is not coincidental. In late March, the New York City Department of Education published preliminary AI guidelines: a traffic-light framework with green for authorized uses, red for prohibited ones, and yellow for situations requiring teacher judgment. The chancellor had not yet released finalized regulations. Parents for AI Caution, a parent organization, advocated for a total halt to the use of AI in classrooms. Education researchers were split; some cited encouraging preliminary findings from specific AI tutoring applications, while others, such as Wayne Holmes, a professor at University College London, pointed out that there is currently no large-scale independent evidence regarding the efficacy or safety of these tools. Both the debate and the uncertainty are real.

A class of third-graders carrying markers and poster paper entered that gap without waiting. That’s encouraging and a little unsettling at the same time. It is encouraging because it implies that children, when given the freedom and space to do so, can think critically about technology in ways that adults sometimes fail to acknowledge. It is unsettling because the fact that eight-year-olds are drafting AI policy in a Harlem charter school while the city’s education department is still gathering input shows how slowly institutions move relative to the rate at which technology enters classrooms.

In a statement, DREAM co-CEO Eve Colavito put it bluntly: “Waiting for research risks leaving students to figure this out on their own, without guidance.” That might be precisely correct. It’s also possible that moving faster than the evidence supports introduces its own risks, and that the kids setting cautious boundaries around a technology they hardly understand are both more exposed and wiser than the adults in the room fully realize.

At the very least, the East Harlem experiment provided a different kind of beginning, one in which the people most affected by the policy participated in its creation. Education policy typically doesn’t operate like that. Perhaps it’s worthwhile to ask why.
