Microsoft’s ambitious goal of building the most reliable AI on the planet is driven more by conviction than by competition. Under the strategic direction of Satya Nadella and the vision of Mustafa Suleyman, the company is developing a technology framework meant to make artificial intelligence both extraordinarily powerful and profoundly human. Massive capital expenditure is part of the story, but so is the conviction that innovation should benefit humanity rather than rule it.

Microsoft’s AI initiative is defined by its scope and its goal. The company has committed more than $80 billion to building AI-enabled data centers in the US and abroad. According to Nadella, these “AI superfactories” act as the digital equivalent of power grids, distributing compute to train ever more complex models. By linking the centers through a dedicated wide-area network for AI, Microsoft has built an infrastructure that can support both its proprietary systems and its long-standing partnership with OpenAI. The priority is reliability rather than speed, so that every gigawatt of compute produces the most useful output possible.

Microsoft sets itself apart from competitors through Mustafa Suleyman’s Humanist Superintelligence concept, which aims to rethink how advanced artificial intelligence is conceived and developed. Rather than pursuing unbridled autonomy, Microsoft is concentrating on “bounded intelligence,” designed to address concrete problems without undermining human agency. Suleyman describes it as “a system that is strikingly similar to human reasoning, yet permanently grounded in our values.” In this view, accountability, control, and empathy are essential components of intelligence.

Bio & Background

| Category | Details |
|---|---|
| Name | Mustafa Suleyman |
| Position | CEO of Microsoft AI; Co-Founder of DeepMind |
| Education | Studied philosophy and theology at Mansfield College, Oxford (left before completing the degree) |
| Notable Role | Leading Microsoft’s Humanist Superintelligence initiative |
| Professional Focus | Building safe, human-centered AI systems |
| Career Highlights | Co-founded DeepMind (acquired by Google); led AI ethics efforts at Google DeepMind before joining Microsoft |
| Reference | https://microsoft.ai/news/towards-humanist-superintelligence |

By emphasizing this human-centered approach, Microsoft wants to counter the growing apprehension about generative AI systems. The company’s vision advocates what Suleyman calls “practical intelligence with purpose,” rejecting both alarmism and naïve optimism. This stands in stark contrast to the narrative of unbridled progress usually associated with Silicon Valley. The goal of Microsoft’s AI is to empower humanity, not to outshine it.

According to Microsoft President Brad Smith, artificial intelligence is “the next general-purpose technology,” comparable to electricity and microchips. The analogy seems apt. Just as electrification changed society, AI has the potential to transform healthcare, education, and productivity. Smith contends, however, that trust is the true obstacle. He argues that the company that earns international trust will shape the future more than the one that merely wins the race. This way of thinking shows how Microsoft’s AI ambition combines moral responsibility with engineering prowess.

Education and inclusivity are important components of Microsoft’s trust-building strategy. By collaborating with community institutions and nonprofits to reach underserved areas, the company intends to train 2.5 million Americans in AI-related skills. By expanding access to AI expertise, Microsoft is fostering an ecosystem that feels inclusive rather than exclusive. The effort ties technological progress to social progress, on the premise that empowerment is the first step toward building trust.

Suleyman takes an equally thorough approach to AI safety. He supports ongoing alignment, a dynamic framework intended to keep AI systems ethical, transparent, and safe over time. “We can’t align AI once and forget it,” he says. “It has to be in perfect alignment forever.” This viewpoint treats safety as a living practice, a continuous conversation between people and their creations, rather than as a limitation. The thinking resembles the iterative process of software development, where diligence takes the place of complacency.

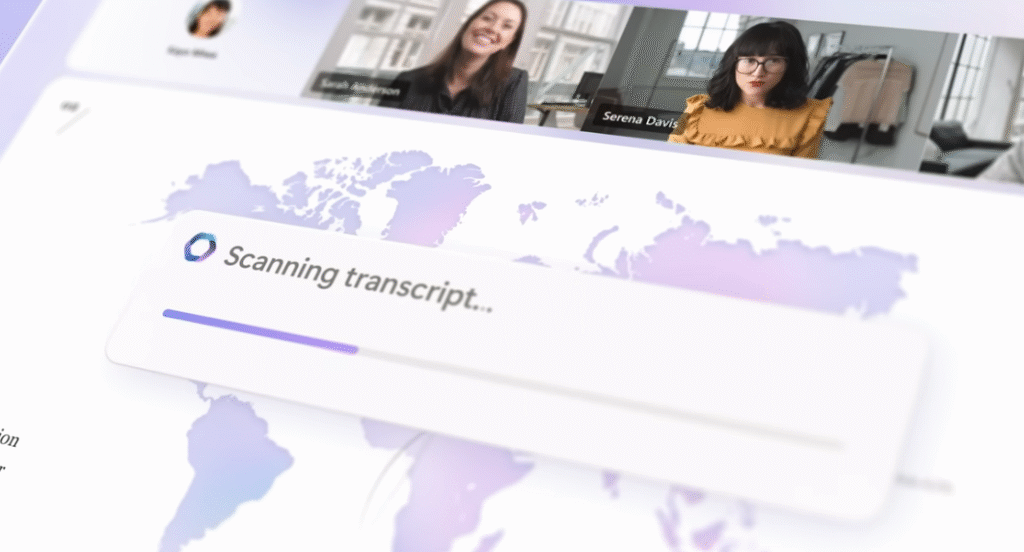

The new Superintelligence Team within Microsoft’s AI division is an example of this way of thinking. Established specifically to create Humanist Superintelligence, it brings together engineers, researchers, and ethicists working to build models that are both capable and interpretable. Their techniques include building dynamic monitoring tools, hallucination correction systems, and content-safety layers into AI platforms such as Azure. These technical safeguards are aimed at one of the most enduring problems with generative AI: whether its output can be believed.

Microsoft’s larger industrial strategy positions AI as a foundational driver across sectors. Suleyman sees medical superintelligence as one of the first and most significant applications. With diagnostic accuracy rates much better than many clinical baselines, the company’s MAI-DxO model offers a glimpse of a future in which healthcare is both highly efficient and widely available. Suleyman explains, “The goal is to make expert-level healthcare available everywhere, not to replace doctors.”

AI may also play a transformative role in energy innovation. According to Suleyman, innovations powered by AI will provide abundant, inexpensive, and clean energy by 2040. The optimism is not mere wishful thinking: it rests on Microsoft’s continuing research into grid intelligence, renewable storage, and AI-optimized materials. By using vast amounts of data to forecast and manage energy flows, AI can reduce waste, cut costs, and speed up sustainability initiatives. At a time of climate urgency, this objective feels especially timely.

Notably, Microsoft’s shift has a collaborative tone. Instead of claiming AI as a private empire, the company presents it as a communal tool, a platform for collective progress. Nadella has repeatedly stressed the need for cooperation between governments, academic institutions, and private businesses. He recently stated, “This is about amplifying humanity, not automating it.” His words capture the company’s changing culture, which is ambitious but remarkably grounded.

Competition remains intense as Google, Anthropic, and Meta pursue their own conceptions of safe intelligence. Microsoft’s emphasis on containment and transparency, however, has given it a more distinctive reputation. By being open about its research publications and engaging actively with regulators, it has been able to preserve both innovation and integrity. In a world full of hype, Microsoft’s restraint reads as confidence rather than caution.
