The clinic had adopted an AI scribe. It listened in real time, transcribed, highlighted keywords, flagged diagnostic possibilities, and generated billing codes. The doctor, apparently satisfied that the system had captured enough of what the patient was saying, turned away from her mid-sentence to review the text on the screen. When the physician-anthropologist accompanying the patient asked to see the AI-generated note after the visit, the summary was accurate and fluid. But it did not convey the catch in her voice when she brought up the stairs, or the brief moment of fear when she hinted that she had begun to avoid them. The machine had recorded the interaction. It hadn’t seen it.

That gap — between what an algorithm captures and what actually matters in a medical interaction — is at the center of one of the most consequential and least regulated shifts in American healthcare. Roughly 81 percent of clinicians now use some form of AI. Two-thirds of American physicians used it as part of their practice in 2024, a 78 percent jump from the year before. An AI system from OpenEvidence recently became the first to score 100 percent on the United States Medical Licensing Exam. Research shows AI can read radiologic images with accuracy rivaling human specialists, detect skin cancers from smartphone photographs, and flag early signs of sepsis faster than clinical teams. The technology is genuinely impressive. The governance of it is not.

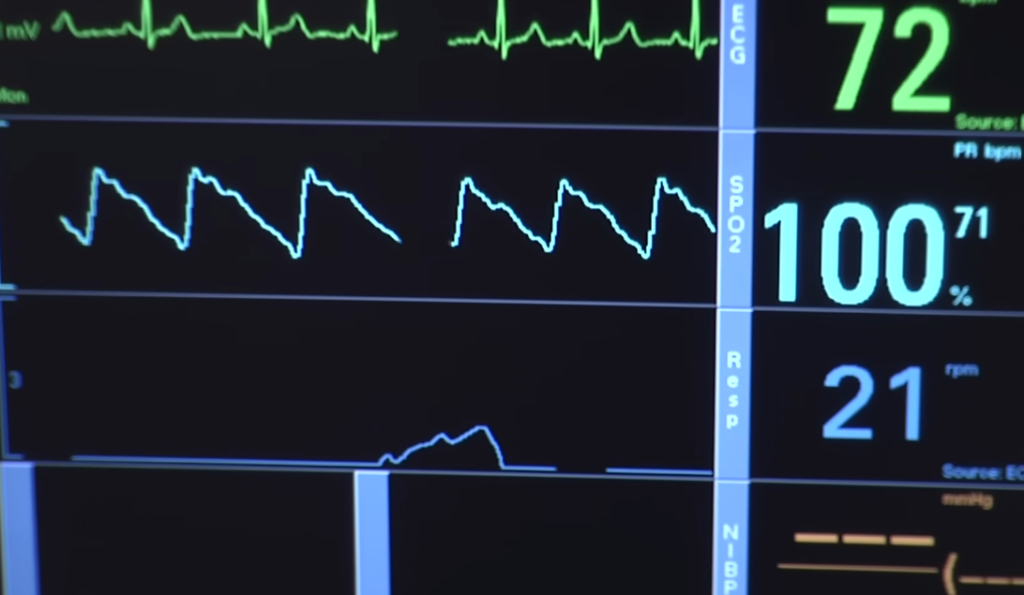

The most tangible harm is already accumulating around bias. A U.S. algorithm designed to predict which patients might need increased future care concluded that Black patients were healthier than equally sick white patients. They were not; they accessed care less frequently, and the system coded lower care utilization as lower disease burden. The bias was structural, unintentional, and consequential: it effectively redirected resources away from Black patients. Pulse oximeters, which systematically underestimate hypoxemia in people with darker skin, fed into triage algorithms during the pandemic, delaying care for patients whose oxygen levels the sensors were quietly misreading. Race-based corrections in kidney function calculations influenced transplant eligibility for years before the practice was revised. Once a bias is embedded in a medical protocol, it tends to persist, not because anyone defends it, but because no one is systematically looking.
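The proxy mechanism behind that first example is simple enough to sketch in a few lines. The toy cohort below is entirely hypothetical: the group labels, the 0.5 access rate, and the four available care slots are invented for illustration and do not come from the real algorithm or its data. It assumes only what the paragraph above describes, that two groups are equally sick and that one of them obtains less care.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str     # hypothetical group label, "A" or "B"
    illness: int   # true disease burden, 1-10 (the model never sees this)
    access: float  # fraction of needed care the patient actually obtains


def utilization(p: Patient) -> float:
    """Observed care use: the proxy the toy model ranks on."""
    return p.illness * p.access


def true_need(p: Patient) -> float:
    """What the model is supposed to be estimating."""
    return float(p.illness)


def build_cohort() -> list[Patient]:
    # Both groups have identical illness levels; only access differs (assumed).
    cohort = []
    for illness in range(1, 11):
        cohort.append(Patient("A", illness, access=1.0))
        cohort.append(Patient("B", illness, access=0.5))
    return cohort


def select_for_extra_care(patients, slots, score):
    """Give the limited slots to the highest-scoring patients."""
    return sorted(patients, key=score, reverse=True)[:slots]


def share_of_group(selected, group) -> float:
    return sum(p.group == group for p in selected) / len(selected)


cohort = build_cohort()
by_proxy = select_for_extra_care(cohort, slots=4, score=utilization)
by_need = select_for_extra_care(cohort, slots=4, score=true_need)

print("Group B share when ranked by utilization:", share_of_group(by_proxy, "B"))
print("Group B share when ranked by true need:  ", share_of_group(by_need, "B"))
```

In this toy setup, ranking by utilization fills every slot from group A, while ranking by true need splits the slots evenly. Nothing in the scoring function mentions group membership; the skew comes entirely from the choice of proxy.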

| Key Information | Details |
|---|---|
| AI Adoption Rate | ~81% of clinicians use some form of AI; two-thirds of American physicians used AI in practice in 2024, a 78% jump from the prior year |
| Diagnostic Capability | An AI system from OpenEvidence became the first to score 100% on the United States Medical Licensing Exam (2025) |
| Bias Example | A U.S. algorithm designed to predict patient care needs concluded Black patients were healthier than equally sick white patients because Black patients accessed care less frequently, effectively denying resources to Black patients |
| Insurance Denial Abuse | UnitedHealth used AI to reject rehabilitation claims for elderly patients; Cigna used automated systems to deny thousands of claims in seconds with no physician review |
| AMA Finding | Over 60% of doctors report unregulated AI tools systematically deny patients coverage for necessary care |
| “Lazy Doctor” Risk | Clinical deskilling: AI over-reliance leads to atrophy of physicians’ reasoning skills and independent clinical judgment |
| Regulatory Status | EU AI Act high-risk healthcare obligations set for full application August 2026; FDA advancing Total Product Lifecycle frameworks; 95% of generative AI pilots at companies currently failing (MIT report, 2025) |
| Black Box Problem | Many AI diagnostic systems are opaque: developers, clinicians, and patients cannot determine how the algorithm reached a diagnosis |
| Accountability Vacuum | When AI causes a wrong diagnosis leading to patient harm, it is often legally unclear whether liability falls on the developer, hospital, or clinician |
| Reference Links | The Guardian: What We Lose When We Surrender Care to Algorithms; NIH/PMC: Artificial Intelligence and Predictive Algorithms in Medicine |

The accountability question is where the legal landscape gets genuinely murky. When an AI-generated recommendation leads to patient harm, the question of who bears responsibility — the developer who trained the model, the hospital that deployed it, the physician who acted on it — has no settled answer. This is not a hypothetical. Inaccurate algorithms can affect enormous numbers of patients simultaneously, in ways that a single physician’s error never could. The opacity of many systems makes accountability harder still. The so-called “black box” problem means that neither clinicians nor patients can determine how a given diagnosis was reached, which makes challenging it — legally or clinically — nearly impossible. There’s a sense that the industry moved fast and built a complicated tangle of liability that nobody has fully untangled yet.

The insurance industry’s use of AI may be the clearest current example of the technology being deployed in ways that directly harm patients. UnitedHealth used AI-driven predictive analytics to reject rehabilitation claims for elderly patients deemed unlikely to recover quickly enough. Cigna used automated review systems to reject thousands of claims in a matter of seconds, without a doctor ever reviewing the individual cases. According to research by the American Medical Association, more than 60% of doctors say that unregulated AI tools routinely deny patients coverage for necessary care. These are not edge cases. They are the system operating at scale, effectively, and with little human intervention.

Then there is the quieter danger known as clinical deskilling, or what some studies call the “lazy doctor” effect. When an algorithm suggests a diagnosis, physicians tend to cut their own reasoning short. Over time, the capacity for independent clinical judgment atrophies: recognizing what doesn’t fit, challenging the obvious answer, reading the patient instead of the chart. Research indicates that adding a human physician to an AI’s diagnostic process can actually decrease accuracy in certain situations. This may look like a case for complete automation, but it more plausibly shows how far excessive dependence on algorithmic recommendations diminishes the physician’s own contribution. The machine teaches the doctor to defer.

The patient side of this equation is changing too. A psychiatrist in the United States described a young woman who had spent the week before her appointment rehearsing her medical narrative with ChatGPT. She refined her descriptions until she was using the clinical language the app had fed back to her: precise, medical, and mostly devoid of personal history or affect. Her genuine uncertainty about her own experience had been edited out. When she talked to her doctor, she spoke in borrowed phrases. The pain was still there, but its texture had been converted into a form the machine would accept. According to the psychiatrist, her story was polished and ready for delivery to insurance companies and pharmacists, and there was a considerable chance that her care would be directed toward the formatted version of her experience rather than the real one.

Regulatory frameworks are finally starting to catch up. Beginning in August 2026, the EU AI Act will impose high-risk requirements on AI medical devices, including bias monitoring and data governance guidelines. The FDA is developing frameworks for adaptive AI systems that update their algorithms over time. These are significant steps. They are also arriving after the technology has already become deeply embedded in patient habits, billing systems, and clinical workflows. According to a 2025 MIT study, 95% of generative AI pilots at businesses are failing in practice. That finding warrants more attention in healthcare than it has received.

It’s difficult to ignore how familiar this pattern is. A truly capable technology enters a complex, high-stakes field before anyone can adequately evaluate its risks. Early adopters and institutions that prioritize efficiency benefit. The harms are distributed unequally, and they fall hardest on patients who don’t know what they’re consenting to, clinicians who weren’t trained to assess what they’re using, and communities whose data was underrepresented in training sets from the start. The question of what medicine is for, whether it is a technical problem to be solved or a human connection to be safeguarded, is usually settled in a way that benefits whoever controls the infrastructure. As the doctor reads the screen, the patient in the examination room is still speaking.