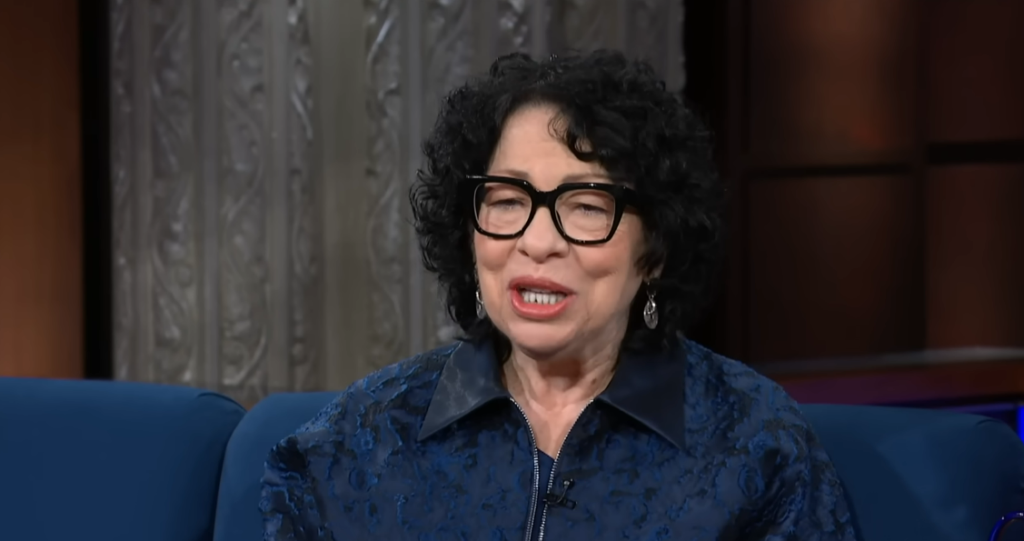

Standing before a room full of law students in Tuscaloosa and acknowledging, in essence, that the Court she sits on might not be thinking creatively enough was an almost refreshingly honest move. That was the implication of Justice Sonia Sotomayor’s remarks on April 9 at the University of Alabama School of Law, where she discussed an AI system a colleague had told her about. The system was designed to forecast how the Supreme Court decides cases, and it had a remarkably high success rate. Her response was blunt: she called it “a very bad thing.” In her view, it showed that the Court was “way too predictable.” She wondered aloud whether the justices were stepping far enough outside their habitual ways of thinking and exposing themselves to genuinely new ideas. It was a rare moment of introspection from someone whose own votes are presumably among the data points the AI analyzes.

The observation drew criticism almost immediately, and not all of it was unfair. One counterargument is that in a court of law, predictability is a strength rather than a weakness. When judicial reasoning is stable, markets can operate, businesses can plan, citizens can understand their rights, and the downstream legal system can function with some coherence. A Supreme Court that routinely surprised everyone would not be a more intellectually vibrant institution; it would be a disorderly one. If AI can accurately predict the Supreme Court, there is a plausible argument that this reflects exactly the kind of principled consistency courts are supposed to exhibit. Sotomayor’s worry that the Court may be too set in its ways is a different question from whether predictability is desirable in itself, and the two should not be conflated.

| Category | Details |
|---|---|
| Person | Justice Sonia Sotomayor |
| Position | Associate Justice, U.S. Supreme Court |
| Appointed | 2009 |
| Education | B.A. Princeton (1976), J.D. Yale (1979) |
| Age | 71 |
| Event | Talk at University of Alabama School of Law, April 9, 2026 |
| Key Quote | “It’s a very bad thing. It shows we’re way too predictable.” |
| Context | AI systems predicting SCOTUS outcomes with high accuracy |
| Field Referenced | Legal Judgment Prediction (LJP) |
| First SCOTUS Case | West v. Barnes, 1791 |
| AI Techniques Used in LJP | Machine learning (decision trees, SVMs, random forests, LLMs) |
| Broader Issue | Recusal questions, judicial bias, AI and legal personhood |
| Her Advice to Law Students | “Do not graduate without learning how to master AI as a tool” |

Still, her intuition that something is off deserves more weight than the quick dismissal gives it. The field of legal judgment prediction has been developing for decades, moving from early expert surveys to statistical models to the current generation of large language models that analyze the text of oral arguments, briefs, and prior opinions. These contemporary tools achieve genuinely high success rates. If an AI can read an oral argument transcript and correctly predict each justice’s vote before the decision is announced, that raises at least a modest question about whether deliberation is doing its job: whether new arguments are being made, and whether the written briefs are having any real effect. Even though Sotomayor’s framing conflates two distinct issues, she is pointing to something real.

One part of her remarks received less attention but merits more: her observation that AI is trained on human output and therefore has “the potential to perpetuate the very best in us and the very worst in us.” This is not a new insight (AI ethicists have raised it for years), but it carries particular weight coming from a justice who reads briefs that may have been drafted partly with AI tools. Algorithmic pattern-matching on past rulings has especially troubling implications for the legal system. If models learn to predict outcomes from the parties’ identities, the questions judges ask, and the way cases are framed, and lawyers then begin tailoring their arguments to those predictions, the feedback loop between AI prediction and human decision-making becomes genuinely hard to untangle.

Striking a more practical note, Sotomayor also urged the students to learn how to use AI as a tool before leaving law school. The two positions are compatible. One can believe that lawyers need to understand and be proficient with AI while also worrying about what happens when AI’s predictive power starts to shape the very behavior it was trained to observe. Recusal is a separate thread: once justices speak publicly about AI, those statements become part of the record, and when AI-related cases eventually reach the Supreme Court, the question of whether those earlier comments amount to prejudice is likely to arise.

As this discussion plays out on social media and in legal academia, the most important sentence Sotomayor spoke in Tuscaloosa appears to be not the one about prediction rates but the one suggesting that the Court might not be open enough to new ideas. That is a harder thing to say, and a harder problem to solve.

Disclaimer

Nothing published on Creative Learning Guild — including news articles, legal news, lawsuit summaries, settlement guides, legal analysis, financial commentary, expert opinion, educational content, or any other material — constitutes legal advice, financial advice, investment advice, or professional counsel of any kind. All content on this website is provided strictly for informational, educational, and news reporting purposes only. Consult your legal or financial advisor before taking any step.