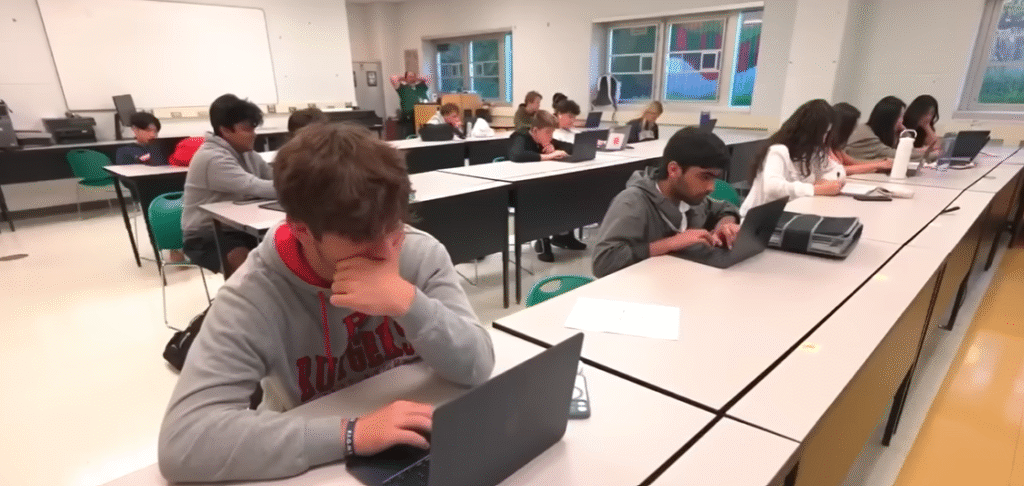

What it means to learn and to teach has changed as artificial intelligence has crept into education like a gentle tide rather than a storm. The classroom, which was formerly characterized by blackboards and human intuition, is now alive with algorithms that can grade essays, predict performance, and read emotions. Although this change is incredibly successful in increasing access, its moral implications are extremely nuanced.

Every click, response, and pause is now captured, so students are increasingly treated as data points. Teachers are torn between enthusiasm and caution: they are excited to see what AI can do but fear its repercussions. It’s eerily reminiscent of an artist viewing a robot’s sketch of a masterpiece and wondering who really owns it.

The issue of academic integrity is among the most urgent. Authenticity is nearly impossible to gauge when students can produce perfect essays or code snippets in a matter of seconds. Teachers say it’s like trying to catch smoke: the content of learning doesn’t change, but the form does. The ethical question remains: is using AI assistance a form of cheating or just the next step in human creativity? Schools are experimenting with AI-detection tools.

The temptation is especially great for students. When a machine can summarize research in seconds, why struggle to write? However, this convenience comes at the cost of effort and curiosity. Teachers must now design assignments that assess creativity and reflection rather than memorization. The lesson has changed: demonstrating sincere thought now matters more than arriving at the right answer.

| Category | Details |
|---|---|
| Key Figure | Selin Akgun |
| Profession | Researcher and Educator |
| Institution | Michigan State University |
| Field of Expertise | Artificial Intelligence in Education, AI Ethics |
| Notable Work | Artificial Intelligence in Education: Addressing Ethical Challenges in K-12 Settings |
| Research Focus | AI applications in K–12 education, ethical challenges, data privacy, and algorithmic bias |
| Collaboration | Christine Greenhow, Co-researcher and AI Education Scholar |
| Cited Publication | National Institutes of Health (NIH) – PMC Article on AI Ethics |

Another unseen danger that is subtly ingrained in AI’s design is bias. Historical inequalities are frequently reproduced by algorithms trained on historical data. They reflect the same shortcomings that society has been working to address for decades. Predictive systems frequently give preference to students who are already privileged when determining which students are “likely to succeed.” While a student from a less privileged background may be unfairly scrutinized, a student from an elite background may be labeled as promising.
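To make the mechanism concrete, here is a minimal Python sketch of how a predictor trained on historical outcomes can pass old inequalities on to new students. Everything in it is an assumption made for illustration: the records in `HISTORY` are invented, the `fit_group_rates` and `predict_success` helpers are hypothetical, and the blending rule does not describe any real system.

```python
from collections import defaultdict

# Hypothetical historical records: (school_type, passed).
# Invented for illustration only; not real data.
HISTORY = [
    ("well_funded", True), ("well_funded", True),
    ("well_funded", True), ("well_funded", False),
    ("under_funded", True), ("under_funded", False),
    ("under_funded", False), ("under_funded", False),
]


def fit_group_rates(records):
    """Learn the historical pass rate for each school type."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for school_type, passed in records:
        totals[school_type] += 1
        passes[school_type] += int(passed)
    return {group: passes[group] / totals[group] for group in totals}


def predict_success(rates, school_type, test_score, weight=0.5):
    """Blend the student's own score (0 to 1) with the group's historical rate."""
    return weight * rates[school_type] + (1 - weight) * test_score


rates = fit_group_rates(HISTORY)

# Two students with the same individual score, from different school types.
score_a = predict_success(rates, "well_funded", 0.8)
score_b = predict_success(rates, "under_funded", 0.8)
print(f"well_funded: {score_a:.3f}, under_funded: {score_b:.3f}")
```

The two students perform identically, yet the one from the historically lower-scoring group receives the lower prediction, purely because the model leans on what happened to earlier students from similar schools.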

Ironically, a technology that is praised for its neutrality frequently exhibits covert bias. The 2020 Ofqual algorithm in England remains an instructive example. In attempting to standardize grades, the system ultimately rewarded students from wealthier schools while penalizing those from working-class schools. The backlash was intense, and the message was clear: ethics cannot be neglected when judgment is replaced by automation.

The moral map is further complicated by the privacy issue. Learning platforms collect vast amounts of data, including reading speeds, test scores, and even facial expressions. Though consent is rarely fully understood, a large portion of this data is used to improve AI performance. Without realizing it, students click “agree,” opening a window into their academic minds. In certain respects, the promise of personalization has turned into a deal for surveillance.

Teachers are especially worried about the potential use of student data outside of the school. Does the learner still own the data if a business uses millions of student interactions to create an algorithm? Ownership ethics are ambiguous. An old question is being echoed digitally: who gains when knowledge turns into money?

The late Stephen Hawking once cautioned that building intelligent machines, if left unchecked, could be either humanity’s greatest triumph or its biggest error. That caution resonates strongly in education. Although many educators are excited to use AI tools, they are still unsure of how to use them properly. Transparency is frequently lacking: few people understand the reasoning behind an AI’s performance predictions or essay grades. The result is responsibility that is shared but diluted.

AI has proven especially helpful for administrative efficiency. At Georgia Tech, the AI teaching assistant “Jill Watson” answers routine student questions around the clock, which has improved response times and eased staff workload. However, some students felt duped when they learned that their “teacher” was a machine. Once the lifeblood of education, trust has been reduced to a code.

In the meantime, AI tutors have been widely adopted in nations like China. For example, Squirrel AI provides adaptive platforms that monitor facial cues and instantly modify difficulty for millions of students. The method is extremely effective but also quite invasive. It promises equality by reaching distant learners, yet it runs the risk of transforming education into surveillance. Never before has the delicate balance between autonomy and access been so precarious.

Some organizations are taking a morally upright stance in the face of this conflict. For young students, MIT’s Media Lab has implemented ethics-based curricula that teach them how algorithms think and how to think critically themselves. Students are asked to create the algorithm for the “best” peanut butter sandwich in one lighthearted yet thought-provoking exercise, which reveals that even recipes are biased. The activity gives concrete form to an abstract truth: the values of its creators are reflected in technology.

This message is echoed by the “AI for Oceans” project from Code.org. By teaching an AI to distinguish between trash and fish, students get a firsthand look at how biased data leads to flawed results. It serves as a gentle introduction to the more profound reality that fairness has to be designed and does not arise on its own. It is encouraging to know that these programs are helping a generation become well-versed in both conscience and coding.

This change in education is especially novel because it incorporates moral reflection into technical training. These courses make ethics fundamental rather than an afterthought. Students are taught to view algorithms as human creations, capable of genius but prone to flaws, rather than as mystical forces.

Frameworks for “ethical AI in education” are becoming more popular in think tanks and universities. Initiatives from UNESCO prioritize openness, human supervision, and privacy protection. They contend that rather than being a substitute, technology ought to be a partner. It’s a perspective that seems necessary and hopeful. AI has the potential to boost inclusivity and creativity, but only if it is used with conscience rather than convenience.